Camera, metadata, timing, and challenge rules create the input state before any reward logic starts.

Verification is the product.

CHLG turns real-world action into trusted outcomes through structured capture, AI verification, anomaly scoring, review routing, and reward logic. Sport & Fitness + Health run on the same proof engine with different trust postures.

AI validation, anomaly scoring, and review logic test whether the attempt is strong enough to count.

Accepted, flagged, and rejected states route differently so the system does not flatten every attempt into one result.

Capture. Verify. Resolve. Record.

The CHLG model is not just challenge content with rewards added on top. It is a multi-layer verification system that turns real-world actions into trusted outputs.

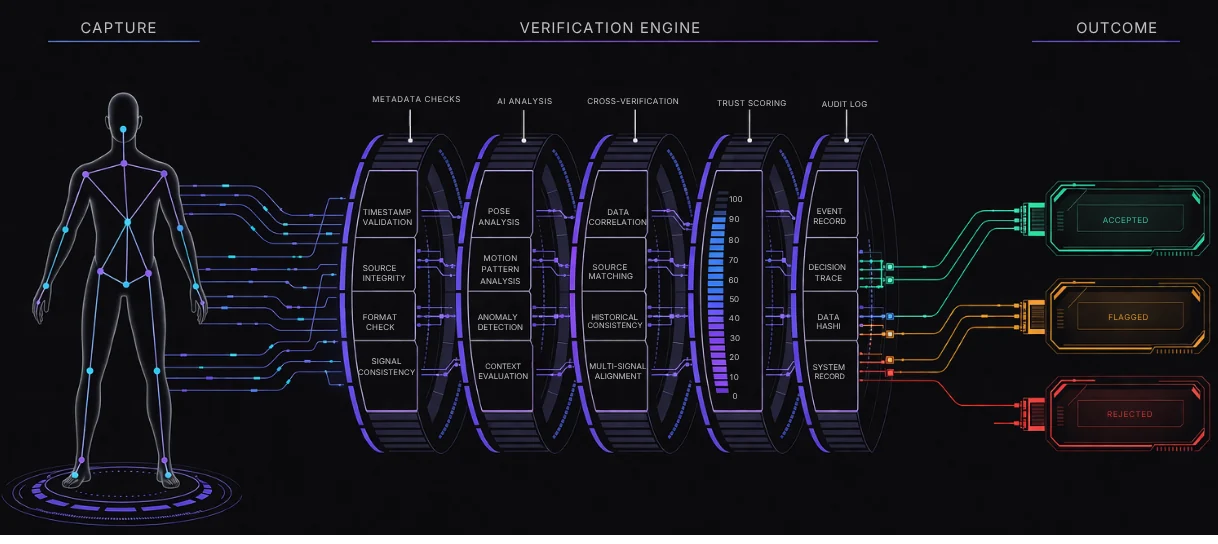

Capture Layer

Camera, metadata, timing, and challenge-specific context create the input state before any reward logic starts.

Verification Engine

Pose analysis, pattern recognition, consistency checks, anti-spoof logic, and anomaly scoring evaluate the proof.

Outcome Layer

The system decides whether the submission is accepted, flagged, or rejected based on the strength of the signals.

On-Chain Trust

Accepted outcomes can update completion, score, streak, and wallet state, and can later feed into reputation ownership.

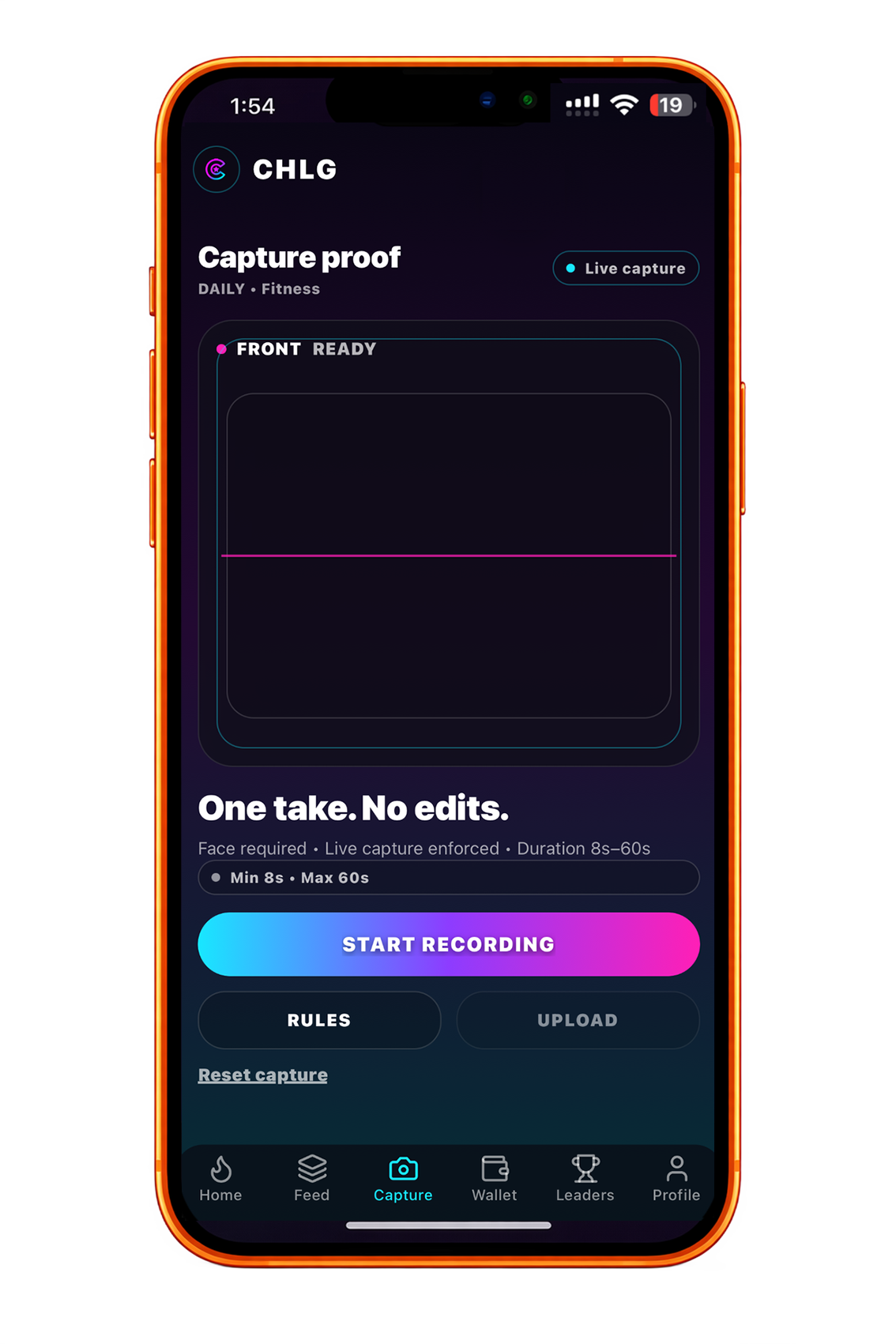

Proof starts before the upload finishes.

CHLG looks at more than a single clip. The capture layer combines camera input, metadata, timing, environment context, and challenge rules so the verification engine has a stronger input than a simple media upload.

The proof screen already knows what challenge is being attempted, what camera view is expected, and how the action should be framed before recording begins.

Recording starts inside a structured flow instead of a blind upload, so the verification layer receives a framed, rule-aware attempt rather than a loose clip dropped in after the fact.

Video, timing, metadata, and challenge rules move forward together so the engine can judge a real attempt, not just a file.
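To make the structured capture more concrete, here is a minimal Python sketch of an attempt that carries its challenge rules with it. The clip is checked against the expected camera view and timing window before any scoring runs. Every name here (`ChallengeRules`, `ProofAttempt`, `precheck`) and every field is a hypothetical illustration, not CHLG's actual schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ChallengeRules:
    # Hypothetical rule set the proof screen knows before recording starts.
    challenge_id: str
    expected_view: str      # e.g. "side" or "front"
    min_seconds: float
    max_seconds: float


@dataclass
class ProofAttempt:
    # Video, timing, metadata, and rules travel together as one input state.
    rules: ChallengeRules
    video_ref: str          # placeholder reference to the recorded clip
    started_at: float       # seconds, recorded by the in-app flow
    ended_at: float
    camera_view: str
    metadata: dict = field(default_factory=dict)

    @property
    def duration(self) -> float:
        return self.ended_at - self.started_at


def precheck(attempt: ProofAttempt) -> list[str]:
    """Return rule violations found before any AI verification runs."""
    problems = []
    if attempt.camera_view != attempt.rules.expected_view:
        problems.append("unexpected camera view")
    if not attempt.rules.min_seconds <= attempt.duration <= attempt.rules.max_seconds:
        problems.append("duration outside challenge window")
    if not attempt.metadata.get("recorded_in_app", False):
        problems.append("clip was not captured inside the structured flow")
    return problems


rules = ChallengeRules("pushups-60s", expected_view="side", min_seconds=55, max_seconds=70)
attempt = ProofAttempt(rules, "clip-001", started_at=1000.0, ended_at=1062.0,
                       camera_view="side", metadata={"recorded_in_app": True})
print(precheck(attempt))
```

Because the rules are attached to the attempt, a file uploaded from outside the flow fails the precheck on its own. The verification engine never has to guess what the clip was supposed to show.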

The moat is the verification layer.

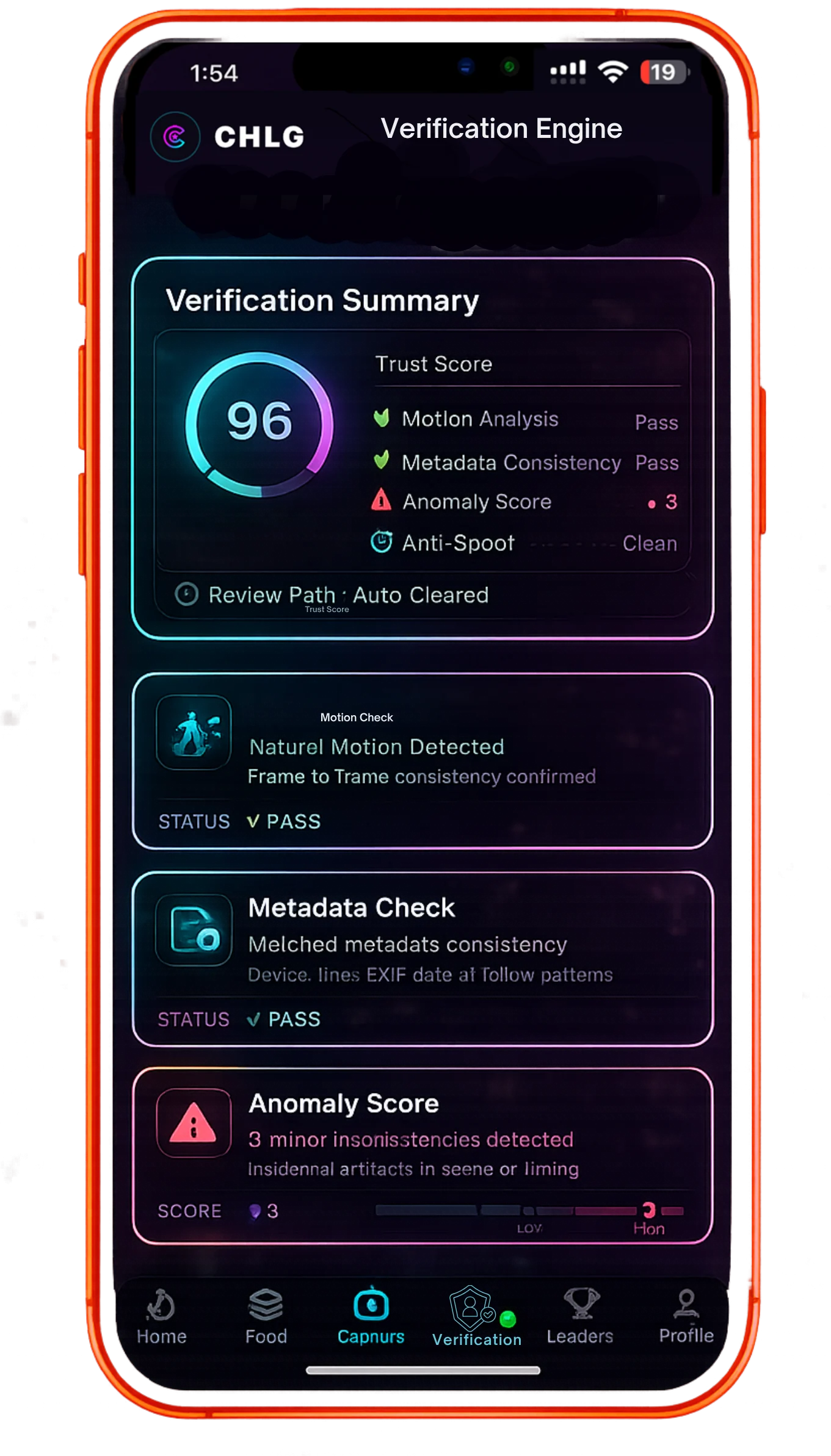

Not every submission should resolve the same way.

A trustworthy system does not force every attempt into the same result. CHLG resolves proof according to signal strength and review posture.

Strong enough to count.

Accepted attempts can confirm completion, update score or streak, unlock leaderboard movement, and route toward rewards.

Ambiguous enough to review.

Flagged attempts can move into reduced trust, delayed reward, or manual review when the system sees uncertainty.

Not valid for the expected flow.

Rejected attempts stay visible as invalid rather than quietly leaking into rewards, reputation, or public challenge status.
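The three-way split can be sketched as a small routing function. This Python example assumes each verification check (pose, consistency, anti-spoof, anomaly) reports a confidence between 0 and 1, and that the weakest signal decides the outcome. The check names, the thresholds, and the weakest-link rule are illustrative assumptions, not CHLG's published logic.

```python
from enum import Enum


class Outcome(Enum):
    ACCEPTED = "accepted"
    FLAGGED = "flagged"
    REJECTED = "rejected"


# Hypothetical thresholds; a real deployment would tune these per challenge.
ACCEPT_AT = 0.8
REJECT_BELOW = 0.4


def resolve(signals: dict[str, float]) -> Outcome:
    """Route an attempt by its weakest verification signal (0.0 to 1.0)."""
    if not signals:
        return Outcome.REJECTED  # no evidence at all cannot count
    weakest = min(signals.values())
    if weakest >= ACCEPT_AT:
        return Outcome.ACCEPTED
    if weakest < REJECT_BELOW:
        return Outcome.REJECTED
    return Outcome.FLAGGED


def side_effects(outcome: Outcome) -> list[str]:
    """Only accepted attempts touch score, streak, and rewards."""
    if outcome is Outcome.ACCEPTED:
        return ["confirm_completion", "update_score_and_streak", "queue_reward"]
    if outcome is Outcome.FLAGGED:
        return ["send_to_review", "hold_reward"]
    return ["record_invalid"]


signals = {"pose": 0.92, "consistency": 0.88, "anti_spoof": 0.61, "anomaly": 0.95}
outcome = resolve(signals)
print(outcome.value, side_effects(outcome))
```

Here the middling anti-spoof score sends an otherwise strong attempt to review with its reward on hold. The weakest-link rule keeps one failing check from being averaged away by strong ones. A weighted average would be a plausible alternative, but it allows exactly that masking.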

See the proof engine in real module context.

Same engine, different verification posture. Sport emphasizes movement, anti-cheat, and leaderboard integrity. Health emphasizes follow-through, safeguards, and review where needed.
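One way to read "same engine, different verification posture" is that both modules share the decision code and differ only in configuration. The Python sketch below assumes a posture is a pair of score thresholds plus a rule for what happens to low-scoring attempts. The `Posture` type, the numbers, and the choice to send low Health scores to review instead of rejecting them are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Posture:
    accept_at: float          # score needed to count automatically
    reject_below: float       # score below which the attempt is invalid
    review_low_scores: bool   # route low scores to a human instead of rejecting


# Hypothetical postures: Sport favors fast, automatic leaderboard decisions;
# Health sets a higher bar for auto-acceptance and prefers review over rejection.
SPORT = Posture(accept_at=0.80, reject_below=0.40, review_low_scores=False)
HEALTH = Posture(accept_at=0.90, reject_below=0.40, review_low_scores=True)


def route(score: float, posture: Posture) -> str:
    """Apply one shared decision rule under a module-specific posture."""
    if score >= posture.accept_at:
        return "accepted"
    if score < posture.reject_below and not posture.review_low_scores:
        return "rejected"
    return "flagged"


for score in (0.95, 0.85, 0.30):
    print(score, route(score, SPORT), route(score, HEALTH))
```

With these numbers, a score of 0.85 counts immediately in Sport but goes to review in Health, and a score of 0.30 is rejected in Sport but still reaches a reviewer in Health.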

See Sport verification in action.

Follow a challenge attempt from capture to verification, then into leaderboard state, streaks, and reward logic.

- Capture: challenge attempt plus video proof

- Checks: movement, timing, and anti-cheat signals

- Outcome: accepted score, rank, and reward update

See Health verification in action.

See how guided journeys use timed check-ins, consent-based proof, and review logic to create a calmer trust model.

- Capture: guided care action or check-in

- Checks: timing, metadata, safeguards, and review

- Outcome: trusted follow-through record

The verification layer is the moat.

Most platforms optimize for reach or activity logging. CHLG is built to optimize for verified outcomes.

Social platforms

Massive reach, strong creator behavior, and native challenge culture, but no trusted way to prove real-world completion.

Fitness and learning apps

Track activity and routines well, but often cannot prove that real-world completion happened in a trustworthy way.

Web3 reward apps

On-chain incentives exist, but weak verification leaves reward systems easy to exploit and difficult to trust.

CHLG

Proof-of-Action verification, portable reward logic, and cross-vertical deployment turn reach into measurable outcomes.

One engine, with Sport & Health first.

The same verification core can route into different economic models, with Sport & Fitness + Health leading the first rollout and other branches following later.

Performance economy

Challenges, leaderboards, creator loops, and public competition are where fast participation and rewards become visible first.

Care participation economy

Recovery, physiotherapy, wellness adherence, and prevention programs need calmer, trust-first verification and reporting from the start.

Integrity economy

Gaming remains a future branch where tournament integrity, anti-cheat routing, and platform trust can sit above the game loop.

Credential economy

Education remains a future branch where milestones, micro-credentials, and proctoring become more useful when completion can be trusted beyond screenshots.

Read the proof system, then track the rollout.

CHLG only works if the verification layer is stronger than the hype around it. Review the docs, check transparency, and follow how the proof engine moves into real module deployment.